How to Test LLM-Based Apps Like an AI Chatbot

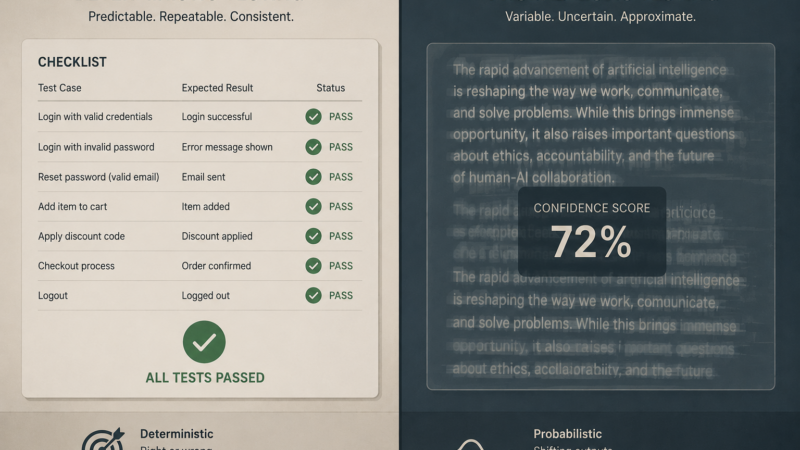

There’s a common belief that testing LLM-based apps is fundamentally different from testing traditional software — that because outputs are non-deterministic, the whole testing playbook needs to be thrown out. This is not entirely true, and believing it should not be an excuse to skip testing altogether.

Yes, an LLM won’t return the exact same string every time. But the intent of a correct answer doesn’t change. If a user asks “what’s the return policy?”, the expected result is still well-defined: the response should mention the return window, be accurate to your policy docs, and not hallucinate a number. The exact phrasing will vary. That’s fine. We’re not testing phrasing — we’re testing correctness.

The real difference isn’t that expected outputs don’t exist. It’s that a human — or another LLM — has to be the judge rather than a simple assertEqual. And because outputs are probabilistic, a single run tells you almost nothing. You need to sample multiple times and measure a pass rate.

Once you internalize those two shifts, everything else is familiar territory.

The Two Adjustments

1. Judgment replaces string matching

In traditional testing you write:

assert response == "Your return window is 30 days."

In LLM testing you write a rubric instead:

"Does the response correctly state the return policy without adding false information?"

The test case still has an expected result. You’ve just moved from matching strings to evaluating intent.

You can do this manually — read the output, decide if it passes your rubric. Or you can feed the rubric to another LLM and let it judge. I’ve been doing it manually for a while now, and the LLM-as-judge approach is the logical next step — same process, just automated. I’ve been moving in that direction building my own orchestration prototype, and I’m confident it works.

One caveat: for structural outputs — JSON, dates, lists — skip the judgment entirely and use traditional assertions. If you asked for JSON, parse it and check the schema. Save the rubric for outputs where meaning matters more than format.

def passes_rubric(question: str, response: str, rubric: str) -> bool:

prompt = f"""

Question: {question}

Response: {response}

Rubric: {rubric}

Does the response meet the rubric? Answer only YES or NO.

"""

return llm(prompt).strip().upper() == "YES"

2. Pass rate replaces pass/fail

A single test run is a coin flip. This is the part I learned the hard way.

I’ve been using NotebookLM to build and test my own prompts — versioning them, running test cases, checking whether each section returns the right kind of output. Not exact words, just the right class of response and the right formatting. It worked fine for ten runs. Then the eleventh example broke the formatting.

That’s not a fluke. That’s the nature of probabilistic outputs. One pass doesn’t mean it works. One fail doesn’t mean it’s broken. You need enough samples to see the real picture.

Run each test case 10–20 times. Measure the pass rate. Then decide what threshold you’re comfortable shipping at. For a customer-facing chatbot, maybe 95% isn’t good enough. For an internal tool, 80% might be fine. That’s a product decision — but you can only make it if you’re measuring.

def eval_with_pass_rate(test_case, n=20):

results = [run_and_judge(test_case) for _ in range(n)]

pass_rate = sum(results) / n

return pass_rate

# Example output:

# "What's the return policy?" → 18/20 passed (90%)

This also gives you something to compare against. Did the new prompt improve things? Run the eval suite before and after. Compare pass rates. That’s real signal — not a vibe check.

It’s Still Just Testing

At the end of the day, you have inputs and you have expected outputs. That hasn’t changed. You still need coverage. You still need to think through flows, edge cases, and what happens when a user does something you didn’t anticipate. You still write test cases. You still ask “what should this do, and did it do it?”

The mechanics of evaluation look different — rubrics instead of assertions, pass rates instead of pass/fail. But the thinking behind them is the same thinking you’ve always done. If you’ve been testing software for any length of time, you already know how to do this. You just need those two calibrations.